I am fascinated by the psychology of scientific fraudsters. What drives these people? If you are smart enough to fake results, surely you have the ability to do research properly? You should also be clever enough to realise that one day you will get caught. And you should know that fabricating results is a worthless exercise that runs completely counter to the spirit of enquiry. Why would anyone pervert their science with fakery?

The reasons why some scientists succumb to corruption have no doubt also intrigued psychologists but of late you could be forgiven for suspecting them of being more preoccupied with committing fraud than analysing it. Psychology is not the only field of inquiry tarnished by incidents of dishonesty — let’s not forget physicist Jan Hendrik Schön, stem cell researcher Hwang Woo-suk or crystallographer HM Krishna Murthy — but its practitioners may be better placed than most to analyse the origins of the problem.

Indeed one of the most prominent recent transgressors has provided some useful insights. In 2011 Diederik Stapel, a professor of social psychology, was suspended from his job at Tilburg University because of suspected fraud; a subsequent investigation found that he had fabricated data over a number of years that affected over 55 of his publications. Interviewed in the New York Times by Yudhijit Bhattacharjee, the disgraced psychologist was candid about where he had gone wrong:

Stapel did not deny that his deceit was driven by ambition. But it was more complicated than that, he told me. He insisted that he loved social psychology but had been frustrated by the messiness of experimental data, which rarely led to clear conclusions. His lifelong obsession with elegance and order, he said, led him to concoct sexy results that journals found attractive. “It was a quest for aesthetics, for beauty — instead of the truth,” he said. He described his behavior as an addiction that drove him to carry out acts of increasingly daring fraud, like a junkie seeking a bigger and better high.

There’s a fair bit to unpack in those few lines. In part the problem is systemic. Stapel’s allusion to journals’ demands for ‘sexy results’ is a nod to one of the corrosive effects on researchers of the construction of journal hierarchies on the shifting and unreliable sands of impact factors. Stapel elaborates later on in the interview:

What the public didn’t realize, he said, was that academic science, too, was becoming a business. “There are scarce resources, you need grants, you need money, there is competition,” he said. “Normal people go to the edge to get that money. Science is of course about discovery, about digging to discover the truth. But it is also communication, persuasion, marketing. I am a salesman.”

Competition for finite resources is no bad thing, helping to ensure that grants and promotions are awarded to the applicants doing the highest quality science, but the process has been undermined by over-reliance on journal impact factors as a measure of achievement. A paper in a ‘top’ journal is now often seen as a more important goal than the publication of the very best science, because busy reviewers rely too readily on the names of the journals in which applicants’ papers are published rather than on the work that those papers report. Although “normal people go to the edge”, it is clear that Stapel went well beyond it. At some point the self-promoting salesman overtook the discoverer of truth.

Unfortunately the issue of publication pressures leading to poor scientific practice is hardly news. A decade ago Peter Lawrence, always worth reading on the conduct of science and scientists, analysed the ‘politics of publication’ and lamented that “when we give the journal priority over the science, we turn ourselves into philistines in our own world.” Lawrence’s gloomy prognosis has been borne out by Fang and Casadevall’s revelation that retraction rates are strongly correlated with impact factors. Stapel’s unmasking continues that sorry trend, one that will not be reversed until we can break our dependency on statistically dubious methods of assessment.

Problems of dubious practice (of varying degrees of severity) are more widespread than most realise, but it is still true that most scientists live with the stress of competition without relinquishing their ethics. So what pushed Stapel over the edge? Good mentorship of junior scientists is recognised as a valuable corrective, but the Dutch researcher’s training is not discussed in detail in the New York Times interview. He himself seems to think that it was the interaction of his personality traits with the highly tensioned system of publication and reward that led to impropriety. His “lifelong obsession with elegance and order” appears to have been at the root of his frustration with “the messiness of experimental data, which rarely led to clear conclusions”.

Stapel is hardly alone in his desire for elegance. Many scientists will have felt the deep satisfaction of conceiving a theory that brings a graceful simplicity to unruly data, or of executing an experiment that confirms a new hypothesis. There is an almost visceral pleasure in such instances of congruence, and aggravation in equal measure when experiment and theory collide abortively. Thomas Henry Huxley identified the tragedy of science more than a century ago (“the slaying of a beautiful hypothesis by an ugly fact”), but for him it was something you simply had to live with.

However, Huxley’s aphorism belies a more complex truth because science is a messy business and it is not always clear when a fact is truly ugly enough to bring down a hypothesis. The judgement can be a fine one and observations are sometimes set aside quite properly as part of plotting an intuitive path to a new insight; but the process is clouded by the degree of conviction that the scientist has in their cherished hypothesis, so the handling of inconvenient truths can shade into malpractice.

Crick and Watson were up-front about the need to discount some of the data that they worked with en route to the structure of DNA (‘some data was bound to be misleading if not plain wrong’, wrote Watson), but others have dissembled*. Mendel, Millikan and Eddington, for example, all discarded observations that famously conflicted with their respective conclusions on heredity, the charge on the electron and the veracity of Einstein’s general theory of relativity (but see update below with regard to Eddington). As Michael Brooks has pointed out in Free Radicals, his entertaining book on rule-breaking researchers, these renowned scientists may have been vindicated by history but their shady practices were hardly justifiable at the time. Stapel’s misdemeanours of fabricating data to support his hypotheses are more extreme (he also loses out because his theories of psychological priming have been undermined by his unmasking) but nevertheless lie on a continuum of fraudulent practice with his scientific forebears. They all share the belief that they were right.

Even so, I can’t quite get the measure of Stapel’s behaviour. Perhaps the success that flowed from his synthetic results, given the seal of approval by peer reviewers and editors when published in prestigious journals, validated an approach that he must have known was scientifically dubious. The New York Times interview conveys a sense of regret now that he has been found out — a regret sharpened by the reaction of his wife, children and parents, forced to look anew at a man they knew so well — but why did he never question himself during the years of fabrication?

In my mind I keep returning to Stapel’s dissatisfaction with the untidiness of experimental data. I think that might be because I have just published one of the messiest papers ever to come out of my lab and am rather pleased with it for precisely that reason. I offer this story as a counter-anecdote to the case of the errant psychologist, not as a holier-than-thou pose, but simply to give a sense of what it feels like to wrestle with real data.

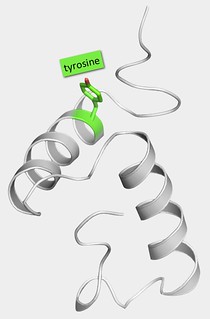

Our paper reports the structure of a norovirus protein called VPg. Though long supposed to be ‘intrinsically disordered’, our work shows that the central portion of VPg’s chain of amino acids folds up into a compact structure consisting of two helices packed tightly against one another; the two ends of the protein remain flexible. It’s nice to confound the prevailing viewpoint on VPg but that’s not the interesting bit about our new results.

The VPg protein — a pair of nicely packed helices

The interesting bit is that our structure doesn’t make sense. Not yet at any rate. Usually, working out the structure of a protein is an enormously helpful step towards figuring out how it works but that’s not the case with VPg. Our structure is a bit baffling.

The protein plays a key role in virus replication, the process of reprogramming infected cells to make the components — proteins and copies of the viral RNA genome — needed to assemble thousands of new virus particles. That’s what infection is all about, at least as far as the virus is concerned (though the infected host often has a different perspective).

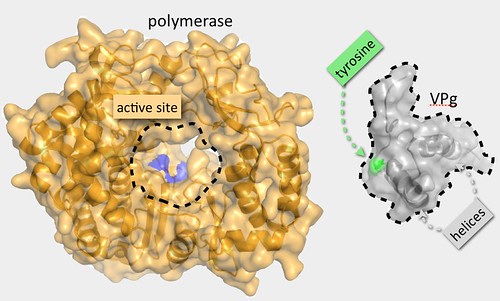

VPg acts as the seed point for the initiation of the synthesis of new viral RNA genomes. To do this, it is bound by the viral polymerase, an enzyme or nanomachine that catalyses the chemical attachment of an RNA base to a specific point (a tyrosine side chain) on the surface of the protein. In turn this RNA base becomes the point of attachment for the next one and so on until the whole RNA chain, all 7500 bases, is complete.

From our structure we can see that the tyrosine anchor point on VPg lies on the first helix of the core structure but the problem is that the core is too big to fit into the cavity within the polymerase where the chemistry of RNA attachment occurs. So at first sight, VPg appears to have a structure that interferes with one of its most important functions. To solve this apparent contradiction, we came up with what I thought was a rather lovely hypothesis: we guessed that the VPg structure has to unfold to interact properly with the polymerase, supposing there might be just enough room for a single helix to get into the active site but not a tightly associated pair.

VPg: too bloody big to fit in the polymerase active site!

We tested this idea by mutating our VPg to introduce amino acid changes that would destabilise its core structure, reasoning that this would make it easier for the polymerase to grab on to the protein, so increasing the rate at which it could add RNA bases. But although the changes made disrupted the protein structure, they almost invariably also reduced the efficiency of the polymerase reaction. The experiment succeeded only in generating an ugly fact to disfigure our hypothesis.

Except it’s not dead yet — not to me. I can make excuses. The method we used to measure the rate of addition of RNA to VPg by the polymerase was less than optimal. We couldn’t work with purified components in a test tube, and so had to monitor the reaction inside living cells using an indirect readout for elongation of the RNA chain. It remains possible that this assay is confounded by the effects of other molecules in the cell. Plus, we haven’t yet been able to analyse the structure of the viral polymerase with VPg bound to it — caught in the act of adding RNA bases. Like Thomas, until I can really see evidence that conflicts with my supposition, I’m not ready to give up on the hypothesis that VPg has to unfold to interact properly with the polymerase.

But it could take quite a while to develop the reagents and the techniques to do these more probing experiments, and since we had already spent quite a number of years getting to this point, we wanted to publish the results. The story we had to tell in the paper is unfinished. To some eyes it might look like a bit of a mess and I was certainly concerned that the reviewers of the Journal of Virology, where we eventually submitted the manuscript for publication, might insist that we go back to the lab to get the data to fill in the gaps. We had an interesting new structure to report but our experimental analyses had only managed to confirm that we don’t yet know what the structure is for. We were asked some searching questions and the manuscript was improved by the subsequent editing, but happily the reviewers (and the editor) still understood that progress in science is more often made in small steps than in giant leaps.

We haven’t tied off the whole story of how VPg works in norovirus RNA replication but that’s OK. Now that we have given an honest account of our puzzling structure, others can also apply their minds to the problem. Indeed the publication has already sparked a couple of interesting email exchanges. The situation might still be messy but it’s far from messed up.

Update, May 12: As pointed out by Cormac in two comments below and by Peter Coles on Twitter (see my reply below), there appear to be strong arguments for not including Eddington in this list of dissemblers. It is ironic perhaps that a blog on messiness in science should itself become rather messy, but I prefer to think it merely shows the value of open discussion.

*Of course, Crick and Watson famously benefitted from not entirely proper access to Franklin’s and Gosling’s X-ray diffraction images of DNA.

The one thing that nobody seems to mention about Stapel is laziness. Doing real social psychology research takes effort; you have to persuade people to do the studies and spend the time testing each participant. In my own field, what often grinds me down are trivial aspects of the logistics of gathering the data. It would be much easier to sit at my desk and generate the numbers. I’d forget about the ‘quest for perfection’ argument – I reckon Stapel didn’t gather the data because he couldn’t be arsed.

“I’d forget about the ‘quest for perfection’ argument — I reckon Stapel didn’t gather the data because he couldn’t be arsed.”

I have to admit that my intuition, too, was that Stapel’s “lifelong obsession with elegance and order” is a bit of post-hoc rationalisation to persuade himself that what he did was excusable, or at least nobly motivated. Laziness, ambition, aesthetics? I doubt the last was really the major factor.

I take the point about laziness being an overlooked factor but I don’t think the aesthetics argument can be so easily discounted, particularly since a nice tidy story backed by a nice tidy set of data can sell well to journals.

“… since a nice tidy story backed by a nice tidy set of data can sell well to journals.”

Then that would fall more under the “ambition” heading than “aesthetics”.

Well yes – my point is merely that they are entangled.

True enough. That’s thanks to the journals that ambitious people want to publish in having their own preference for neat and tidy data. I do agree (of course) that that is the fundamental problem.

An excellent post, Stephen, and one I’ll be recommending all early-career researchers read. Congratulations too for having “just published one of the messiest papers ever to come out of my lab” and using that as an example to show “what it feels like to wrestle with real data”.

I’m also fascinated by so-called “unconscious fraud”, whereby scientists fiddle results without really being aware they’re doing anything wrong – discarding datapoints that don’t fit the curve, for example, and rationalizing that activity away. The lab lit novel INTUITION by Allegra Goodman has a great illustration of unconscious fraud in action.

I’d like to point out that it is Academe itself that has created the pressures discussed here and misused the Impact Factor, not “journals”, as Mike Taylor seems to imply in his response. I think the author makes this clear, but some have a knee-jerk reaction to blame all scholarly publishing ills on journals/publishers. Regardless of where the pressure comes from, those who engage in unethical practices have no one to blame but themselves. That lying and cheating are wrong is something my children know. I’d hope we expect the same from our researchers.

I agree it’s a largely self-inflicted wound — the other great tragedy of science? Journals have in fact helped to publicise the problem — I cited several pieces from Nature and other journals in my piece — but they are guilty to some degree of playing along with the impact factor game. I would like to see more of them take Nature Materials’ lead and publish warnings about the mis-use of impact factors. But at the end of the day it is down to the scientific community to put its house in order if we want our colleagues to behave well.

Just for the record, I do agree. Evaluation by journal rather than by quality exacerbates the problem, but there is a more fundamental issue of what we mean by “quality”, and evaluating that by how nice the result is rather than how good the work was.

I take issue with the several rather sly digs you make at journals – as if saying that if journals and impact factors didn’t exist, there would be less temptation for scientists to be fraudulent. This is like saying that poverty is a cause of crime. It might be a contributing factor, but it wasn’t poverty that was the proximate cause of Joe Bloggs mugging an old lady in the street. Joe Bloggs could have chosen not to.

Your problem, if I may say so, is perspective. You aren’t a journal editor. It’s when results look too cosy that we editors are most sceptical. We journal editors are increasingly worried about such things as plagiarism, fraud and reproducibility of results, even though we aren’t equipped to be policemen. If you have ever been involved in the retraction of a paper – well, it’s a horrible, horrible experience.

I get the impression that many scientists use journals as a convenient scapegoat when they should be looking closer to home and taking more responsibility for their own actions. Perhaps if scientists knew more about what goes on inside journals they’d be less eager to lay the blame at our door.

Sly digs, Henry? I don’t see that in the piece. I certainly mentioned impact factors but the post makes clear that the main problem with them is their mis-use by reviewers — i.e. the research community. Plus, I acknowledged that most scientists behave well. I kept returning to the question of why Stapel erred when so many others have not. Ultimately it is his responsibility but, as others have also written, there are systemic issues to be considered.

That said, I’m sure it would be healthy for more scientists to be aware of the work that goes on inside journals, and I know that you yourself make efforts in this regard.

Reminds me of the expression – that the soundtrack to discovery is not “eureka!” but “Oh… that’s odd”

I was influenced by what I first read 20 years ago in Max Perutz’s book “Is science necessary?” when he was discussing what Peter Medawar had said – (approximately) that it’s OK to interpret data wrongly, but it’s unforgivable to publish data you could not reproduce. If the data is OK, the field can eventually figure it out, but if the data is wrong it hurts the field. It’s better to have ugly data out there, so that the field as a whole can figure it out eventually.

I don’t think you’re right on Eddington. That story began with a sociological study in the 1970s, a study that has been roundly debunked by historians of physics. Sadly, the mud has stuck fast (email me if you want a reference on this).

Re motivation, I think it’s quite clear; all the talk of beauty and elegance suggests he was quite sure what the answer was, and the acquiring of supporting data became more and more of a tedious box-ticking exercise. This is a very common motivation in scientific fraud, apparently, because the perpetrator doesn’t see it as true fraud, more “the end justifies the means”.

Cormac

Interesting – I got the story (that Eddington discarded observations that didn’t fit with Einstein’s prediction of the gravitational effect on light) from Michael Brooks’ book ‘Free Radicals’. If this account has been debunked would certainly like to see the reference.

I’ve discussed the fallaciousness of the accusation against the Eddington group for discounting an alleged anomaly for GTR. The assumptions required for the statistical analysis could scarcely be maintained due to the well-known and blatant effects of distortions of the mirror by the sun, and if the assumptions are granted, there is no anomaly. I discuss this in Mayo 1996 (Error and the Growth of Experimental Knowledge), accessible from the publications list on my blog, but I’ve also seen the accusation debunked by re-analysis. With that said, none of the 1919 eclipse results were at all accurate. That required radioastronomical results years later.

Great post. If I recall correctly, the inventor of impact factors, Eugene Garfield put a “Health Warning” on them. We have forgotten this to our cost.

Journals cannot be blameless; they are part of the problem. One only has to look at the incredibly high level of non-reproducible science that is published.

Laziness is an issue, particularly if you enjoy certain aspects of life more than others. Stapel doubtless enjoyed the travel and fed off the status, “fame” and fawning. He wasn’t someone who enjoyed a hard slog or trying to put together a jigsaw without a picture, missing half the pieces and with 10-fold more pieces from an assortment of other puzzles. So in some senses a freeloader. Simply an individual who at some point, or indeed from the outset, decided here was a nice easy life.

A possible telling question: if you were transferred to flipping hamburgers in McDs tomorrow, what would you do in your spare time? Amateur scientist or something else?

Hi Stephen, great blog.

The best source on this I have come across is by the physicist Daniel Kennefick; see

http://astro.berkeley.edu/~kalas/labs/documents/kennefick_phystoday_09.pdf

Essentially, Kennefick’s point is that the accusation of bias stems from a 1970s study by the sociologists Earman and Glymour, later made famous by Collins and Pinch (see refs in the paper). While the sociological study was strong on considerations such as Eddington’s belief in relativity, it paid very little attention to the raw data itself. For example, the sociologists insist that the results from the 2nd expedition were dropped because they didn’t match Einstein’s predictions, while in fact Eddington and Dyson made it clear at the time that they were dropped because of systematic experimental errors in the measurements of the 2nd expedition. Another interesting finding is that it was the expedition of Dyson, not Eddington, that provided the crucial data, which doesn’t really fit the bias thesis since Dyson was a relativity skeptic!

I think Kennefick’s paper is very fair and balanced and it is highly regarded in the astronomical community. By contrast, many physicists suspect that the sociological study is an example where sociologists and non-scientist historians have reached a conclusion without really understanding the raw data. It seems their study was then further simplified by many others such as Collins and Pinch. In any case, it seems to me that there is a problem with the study even from a sociological point of view: both Eddington and Dyson had established formidable reputations as extremely careful experimentalists of world stature – they were hardly going to throw it all away on the word of some German bloke!

Finally, for them to be included as ‘fraudsters’ is the end of a very long process that has got further and further away from the raw data!

Regards, Cormac

Cormac – many thanks for your comment and for posting Kennefick’s article. That provides a valuable corrective.

In the meantime Peter Coles also pointed out on Twitter that my post is unfair on Eddington and directed me to Eddington’s original paper. I can’t pretend to have read or followed every word of the argument but it nevertheless seems clear that all their experimental observations are considered and the reasons for discounting particular measurements are discussed openly. It rather looks as if Eddington and his co-workers did a decent job of grappling honestly with data that were less than optimal. I see no hint of fabrication or dissembling that would put him in a gallery of rogues, so will update the post accordingly.

Coles’ own paper on the subject provides more historical context and a useful discussion on the handling of errors in the measurements (I’ve also ordered his book).

Really interesting post, Stephen, and it’s good to revisit some of the rehabilitations of Eddington: Peter Coles’ paper is very interesting, as is one from Daniel Kennefick, referenced in this Phil Ball article on the Nature site.

I think it is almost impossible to know what Eddington’s motivation was; surely every scientist has mixed motives? Eddington felt Einstein was right, and that it was important he was shown publicly to be right. He also knew it was important to do the science convincingly; he was well aware that the test of a scientific observation is in the reaction of one’s colleagues – the result must be convincing to a high proportion of qualified scientists (who all come with their own prejudices – many of which would have been slanted against Einstein).

We all know data can be sliced in many ways, and hindsight is a particularly valuable tool for analysis! That is, after all, why scientists endeavour to specify in advance of seeing the data what they are going to do with it. There is a leaning amongst astrophysicists to give Eddington the benefit of the doubt and assume he didn’t do anything he shouldn’t have, just as there is a tendency for social scientists to want to see something that perhaps isn’t there.

For my part, I’d be more convinced of Eddington’s purity if we hadn’t learned of his abominable treatment of Chandrasekhar a few years later. Clearly, Eddington was a man with issues. I also think the contemporary ambivalence is somewhat telling about the level of conviction people had about Eddington’s data. As Coles says, “[T]he skepticism seems in retrospect to be entirely justified”. The fact that the Nobel Prize Committee were also sceptical is notable: they wrote to Einstein, awarding his Nobel, “in consideration of your work on theoretical physics and in particular for your discovery of the law of the photoelectric effect, but without taking into account the value which will be accorded your relativity and gravitation theories after these are confirmed in the future”.

As far forward as 1923, one commenter wrote that people weren’t clear on how or why Eddington had given “almost negligible weight” to the Sobral plates, and found the notion that the eclipse results came down in favour of Einstein baffling: “the logic of the situation does not seem entirely clear.” (Sorry, my source for that is packed away somewhere in my loft.) So the discussions were rumbling on. We all know that science proceeds incredibly slowly. It takes more than one observation to convince most people, and the probing and questioning of techniques and possible weak points of any controversial experiment or observation goes on for years – decades, sometimes.

As for Einstein, what we know of his personality tells us that he would (and did) welcome Eddington’s results without too much examination of the data points. I’d also point out that Einstein exonerated Galileo. In a preface to a modern edition of Galileo’s Dialogue, Einstein wrote, ‘It was Galileo’s longing for a mechanical proof of the motion of the Earth which misled him into formulating a wrong theory of the tides…His endeavors are not so much directed at “factual knowledge” as at “comprehension”.’ I think scientists could teach religious types a thing or two about being generous with forgiveness!

Stephen, thanks for writing on this and using yourself as the object of ridicule for messy science. It is precisely this requirement of science, that models, theories and concepts MUST be explained, that drives it forward and avoids stagnant orbiting around flawed but convenient ideas. Your structure (as presumably both you and at least a couple of the reviewers understood) requires adjustment of theory. All compelling, quality data are created equal and should be treated equally. Conundrums must be resolved. Such is science. That is why making up data to satisfy a preconceived idea is so destructive. It not only distracts and distorts the field, it forces others to try to reconcile their real data with it. The synthetic data have the advantage of appealing to logic and simple tidiness, making it all the harder for the new pretenders to depose the false king.

As an aside, I was contacted by Diederik Stapel today following a tweet in which I suggested, tongue-in-cheek, that the best person to talk about his fraud was himself, based on the narcissistic language pervading the New York Times story. From the email, he clearly feels he deserves such audiences and opportunities to study his transgressions. I can only surmise that he feels his own deviancy can only be effectively explained by himself. Personally, I have heard enough from him and not enough from the many people, including his own trainees, who were directly injured by his actions. This was not a victimless crime and it wasn’t only science that was damaged.

Thanks Jim. I think you are right that it would be good to hear more directly of the impact on those affected by Stapel’s fraud. Nevertheless, I would be interested also to hear more from the perpetrator himself, as long as it is done in a way that is open to challenge and discussion.

I think there is value in talking to the fraudsters. Am I right in thinking that Stapel is at least relatively unusual in being prepared to say something about what he did? No word has been heard from HM Krishna Murthy, as far as I am aware, but since he worked in my field of structural biology, I would be particularly interested to understand how he justified his actions to himself.

I agree that we should always explore all sources and converse with the perpetrators. These events should not be buried and accuracy is very important. But, I don’t think it is fair to give them a voice in the absence of their victims – who may well be further injured or pressured to speak. It’s a difficult situation, but Stapel forfeited his rights to an audience by his actions and I feel we must respect the victims in any efforts to further probe his motivations through his own words.

He replied to my reply in which I stated my opinions and therefore I may sound hypocritical. I am interested in his perspective but I also do not wish to give him a public platform. That he is willing to speak does not give him the right to, as the many people he threw to the wolves may not be so willing, for good reasons that I respect.

I understand your reservations but on balance think it would be more useful if Stapel’s motivation were subject to more open scrutiny and it seems to me he could contribute usefully to that — assuming, of course, he doesn’t plan to dissemble further.

Owa! I clean forgot Peter Coles’s research on the Eddington experiment, idiotic as I heard him describe it in person last summer. Apologies for not mentioning it. Thanks for the revised post.

P.P.S. The really worrying thing is that the Eddington experiment is only one of a number of case studies where physicists hotly dispute allegations of malpractice by philosophers and sociologists of science…there are some good examples to be found in the book ‘A House Built on Sand’ by Noretta Koertge.

No worries Cormac — we all forget things and I’m sure Peter will forgive you… 😉

Thanks for bringing Koertge’s book to my attention. It’s not an area I know a great deal about, though I do have Shapin and Schaffer’s ‘Leviathan and the Air-Pump’ on my reading list.

Hello Michael Brooks, I haven’t read your book yet but I’m looking forward to it! (We met at Harvard.)

Those are interesting comments on Eddington but I think there are three important points not mentioned:

1. What about Dyson?

The senior man in the group, Frank Dyson, was a world-class observer renowned for his meticulous studies. He was not a particular fan of relativity, or any other new-fangled theory. It was his data that was ultimately used – why would he change the habits of a lifetime or risk his reputation on the first test of a new theory?

2. I think sociologists’ studies of scientific data often ignore the concept of justification. Dyson and Eddington were succeeded by younger men who would have made their reputations by showing the Englishmen wrong, or that they had reached a false conclusion – the opposite happened: no evidence of malpractice ever emerged from the astronomers.

3. It seems Eddington did behave rather strangely in later life – but that has nothing to do with his observational science

I don’t see this debate as an even match between astronomers defending an icon vs sociologists trying to make a point – I see it as sociologists speculating about motivation in the absence of evidence, neglecting to study the scientific data carefully, and neglecting to follow the standard ins and outs of the checks and balances of such measurements.

All of which is fine – what amazes me is that the sociologists’ narrative has become the dominant one, while studies that include a reanalysis of the old data have been completely ignored. The Kennefick paper is worth reading again and again; very balanced, I think.

Regards, Cormac