Full marks and a side order of brownie points for the Royal Society: they have started publishing the citation distributions for all their journals.

This might seem like an unusual and rather technical move to celebrate, but it matters. It will help to lift the heavy stone of the journal impact factor that has been squeezing the life out of the body scientific. The Royal Society has now joined the EMBO Journal in committing to be more transparent about the origins of this dubious and troubling metric.

I don’t wish to rehearse the details in full since I have previously described the pernicious effects of scientists’ and publishers’ obsession with journal impact factors and the value of making citation distributions available. But in brief: I hope that the ready availability of these distributions – to show the skew and variation of the citation performance of the papers in any journal – will enable researchers to develop a more sophisticated approach to the evaluation of the work of our peers.

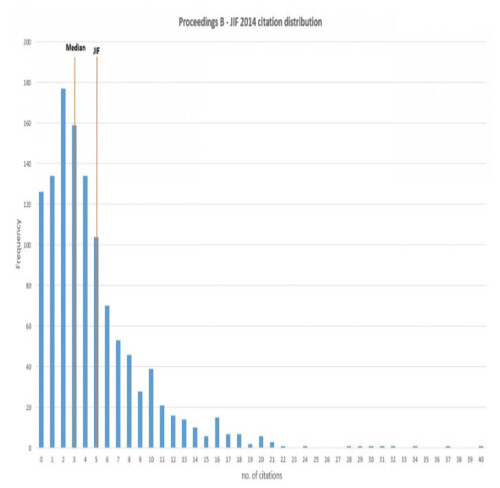

The image above shows the citation distribution for the Royal Society’s Proceedings B journal; you can find the original by clicking on the ‘Citation Metrics’ link in the ‘About Us’ tab. As is the case for every academic journal, it shows that the impact factor, an arithmetic mean, over-estimates the performance of a typical paper and conceals a huge range in citation counts.
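To make the point about the mean concrete, here is a minimal Python sketch. It treats the impact factor simply as the arithmetic mean of a journal's citation counts (the real calculation divides the citations received in one year by items published in the previous two years by the number of ‘citable items’, a detail ignored here), and the citation counts are invented for illustration rather than taken from any journal.

```python
from statistics import mean, median

# Hypothetical citation counts for ten papers in one journal's
# impact-factor window (invented numbers, not real journal data).
citations = [0, 1, 1, 2, 2, 3, 4, 5, 9, 43]

impact_factor_like = mean(citations)  # IF-style arithmetic mean
typical_paper = median(citations)     # middle of the skewed distribution
# Fraction of papers cited less often than the "impact factor".
share_below_mean = sum(c < impact_factor_like for c in citations) / len(citations)

print(f"mean = {impact_factor_like:.1f}")
print(f"median = {typical_paper}")
print(f"papers below the mean: {share_below_mean:.0%}")
```

With these made-up numbers a single paper with 43 citations drags the mean up to 7, while the median paper has only 2.5 citations and eight out of ten papers sit below the ‘impact factor’.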

As I wrote back in June:

> …the IF is a poor discriminator between journals and a dreadful one for papers. Publishing citation distributions therefore directs the attention of anyone who cares about doing evaluation properly back where it belongs: to the work itself.

So three cheers for the Royal Society for having the courage to be so open with these data!

Now: who’s next?

I have been discussing the idea of making citation distributions available with a number of other publishers and have heard some encouraging noises. It seems likely that there will be further significant moves in this direction in the new year, which I will be happy to report.

I dare to be optimistic that before too long the practice may become widespread, and that we may have at our disposal a tool that helps us to do a better job of assessing research and researchers. This is by no means a revolution, and we all know that old habits die hard. Even so, this is a step in the right direction and I will take what I can get.

Update (05/12/15, 00:55): Better late than never, but I really should have thanked the Royal Society’s publishing director, Dr Stuart Taylor, for taking this initiative forward.

Update (05/12/15, 01:05): Well, I didn’t have to wait long to find out which would be the next journal to join the club. Nature Chemistry’s editor Dr Stuart Cantrill crunched the numbers, posted the distribution and has written up his analysis on the Sceptical Chymist blog. He tells me there’ll be a link to his post from the journal homepage when it’s next updated.

Update (11/12/15, 11:26): Stuart Cantrill has clearly caught the citation distribution bug. He has now also done a very nice comparative analysis of a selection of chemistry journals. It shows, as expected, that the distributions all have approximately the same shape, and it helps to reinforce the message that even journals with low impact factors can be relied on to publish papers that attract large numbers of citations.
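The kind of overlap that such comparisons reveal can be checked with a few lines of Python. This is only a sketch on invented data for two hypothetical journals, not a reproduction of Stuart Cantrill’s analysis; the names `journal_low`, `journal_high` and `share_above` are purely illustrative.

```python
from statistics import mean, median

# Invented citation counts for two hypothetical journals (not real data).
journal_low = [0, 0, 1, 1, 2, 2, 3, 5, 8, 18]
journal_high = [1, 2, 3, 4, 5, 6, 8, 11, 20, 60]


def share_above(papers, threshold):
    """Fraction of papers cited more often than `threshold`."""
    return sum(c > threshold for c in papers) / len(papers)


high_median = median(journal_high)  # typical paper in the higher-mean journal
top_low = max(journal_low)          # best paper in the lower-mean journal
# Fraction of the higher-mean journal's papers out-cited by that best paper.
beaten_by_top_low = sum(c < top_low for c in journal_high) / len(journal_high)

print(f"means: low = {mean(journal_low)}, high = {mean(journal_high)}")
print(f"low-journal papers above the high-journal median: "
      f"{share_above(journal_low, high_median):.0%}")
print(f"high-journal papers out-cited by the low journal's top paper: "
      f"{beaten_by_top_low:.0%}")
```

Here the higher-mean journal has three times the ‘impact factor’ of the other, yet a fifth of the lower-mean journal’s papers out-cite its median paper, and the lower-mean journal’s best paper out-cites 80% of its papers.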

Out of interest, how well do the citation numbers fit a Poisson distribution?

I’m going to leave that as an exercise for the reader… 😉

Not strictly on topic for this posting, but knowing of your interest in ‘open access journals’, have you seen this: http://www.jurn.org/#gsc.tab=0

Thanks to Thony Christie and his excellent Whewell’s Gazette for publicising it.

Thanks for the link – I’d not seen that before. What are the advantages over Google Scholar?